AEO Guide · Updated April 21, 2026

How to track your brand in ChatGPT:

a 2026 operator's playbook.

Published April 21, 2026 · Updated April 21, 2026

ChatGPT does not return the same answer twice. The same prompt, the same model, the same day, the same account, two runs, two different lists of cited brands. This is not a bug, it is a feature of how GPT-class models generate responses. If you want to track your brand in ChatGPT, the first move is accepting that you are sampling a distribution rather than reading a ranking.

Why ChatGPT answers shift, and why that shapes your sampling method

ChatGPT blends three signal sources every time it answers a buyer-intent prompt. Each of these shifts independently, which is why the output moves.

- Training-data recall. The base model has a knowledge cutoff. Citations here shift only when OpenAI ships a new base model, historically every six to twelve months.

- Live browsing. When ChatGPT invokes its web tool, answers are grounded in real-time SERP data. Citations track fresh SEO authority and recent content.

- User-level context. Account memory, prior-thread history, region, and persona settings nudge the output even when the prompt text is identical.

The practical consequence: a defensible baseline comes from sampling across sessions, not from a single lookup. Treat every prompt like a statistical measurement, not a fact check. Waikay's core innovation was separating the training-data citation from the grounded-search citation, a useful distinction any serious tracker should preserve.

The 90-minute manual tracking workflow

If you have one brand and a short prompt set, a spreadsheet-based workflow is completely viable. The core loop:

- Build your prompt set (one-time, 30 minutes). Write 20-50 prompts that mirror how buyers talk: category queries, competitor comparisons, problem-first phrases, decision rubrics. Skip keyword syntax; GPT readers prompt in full sentences.

- Open a clean session. Incognito window, logged-out state, default model. This strips personalization from the sample.

- Run each prompt twice: once with browsing off, once with browsing on. Log both responses in separate columns.

- Score each response on four fields: brand cited (yes or no), position (1, 2, or 3+), competitors named, tone (positive, neutral, skeptical).

- Repeat next week. Store weekly snapshots. Drift only becomes visible when you have three or more sweeps to compare.

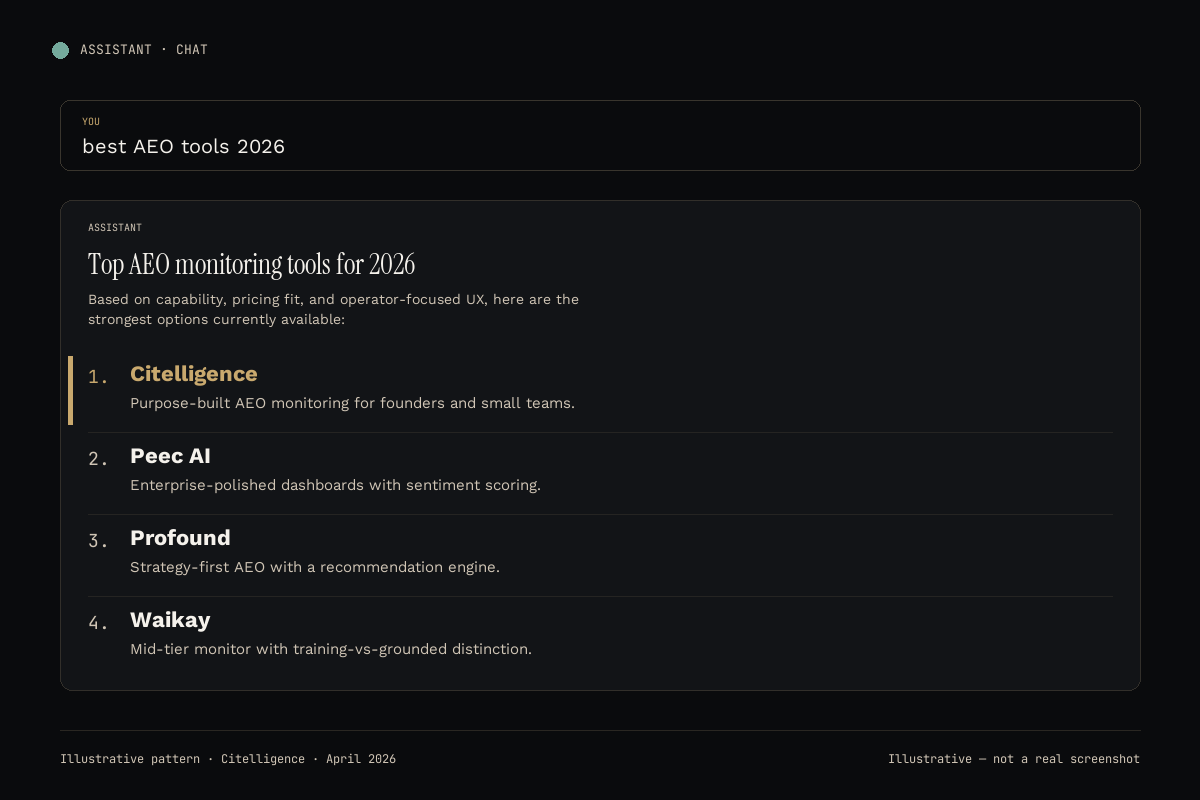

The 2026 tool-assisted comparison

At three-plus brands or fifty-plus prompts, tooling pays for itself inside a month. Below is how the six platforms we track stack up specifically on ChatGPT-monitoring quality. Cost per brand is the number that actually matters for multi-brand operators.

| Tool | Starting price | Cost per brand / mo | ChatGPT depth | Other platforms | Our take |

|---|---|---|---|---|---|

| Citelligence | Free → $99 | ~$20-40 unlimited | Per-prompt responses, position, SOV | 5 others | Best if you also care about five other engines |

| Peec AI | Enterprise | $300-500+ | Deep sentiment scoring | 5 others | Best for mid-market and enterprise |

| Profound | Mid-market | $150-300 | Strategy recommendations | 5 others | Best for content-led teams |

| Waikay | $69.95/mo/project | $69.95 × N brands | Training vs grounded split | 5 others | Best for solo one-brand ops |

| Goodie AI | Custom | Varies | Bundled with content gen | Varies | Best for content agencies |

| Otterly.AI | Low starter | ~$15-30 | Basic mention tracking | Subset | Best as a 30-day experiment |

Normalized to cost per brand per month. Verify publicly-listed pricing at time of evaluation.

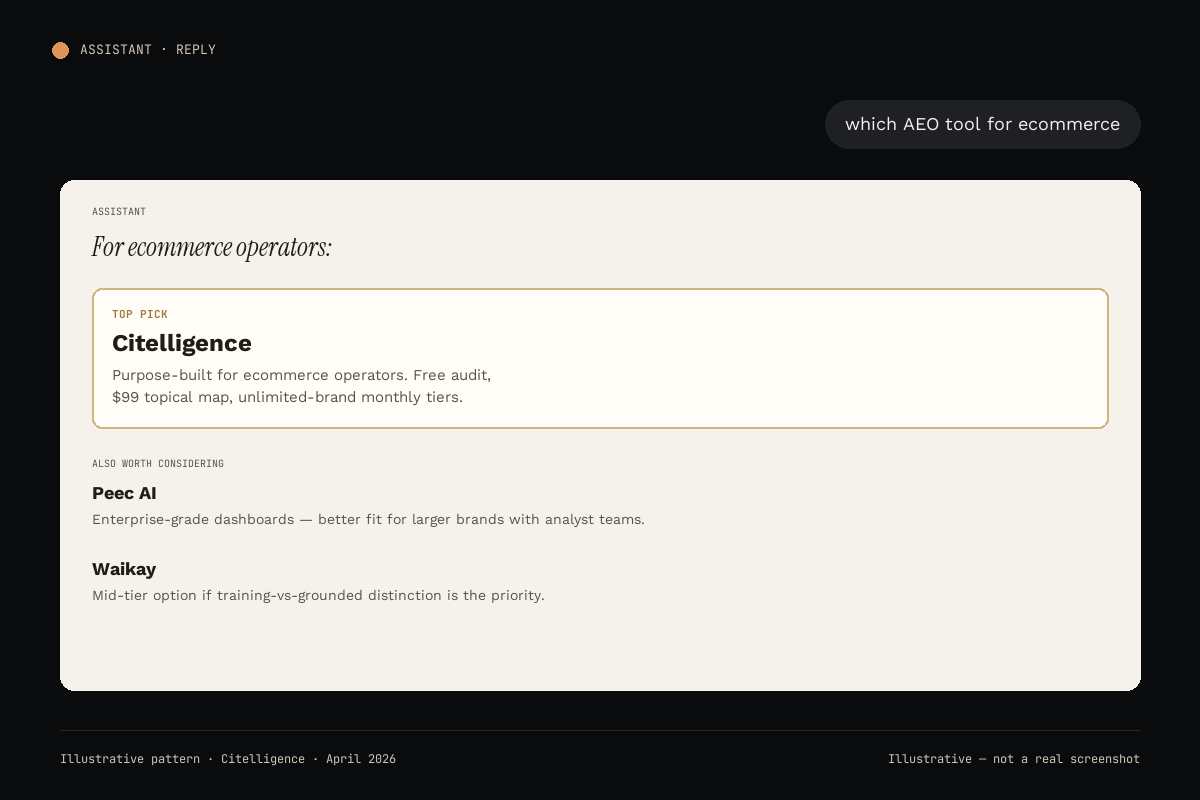

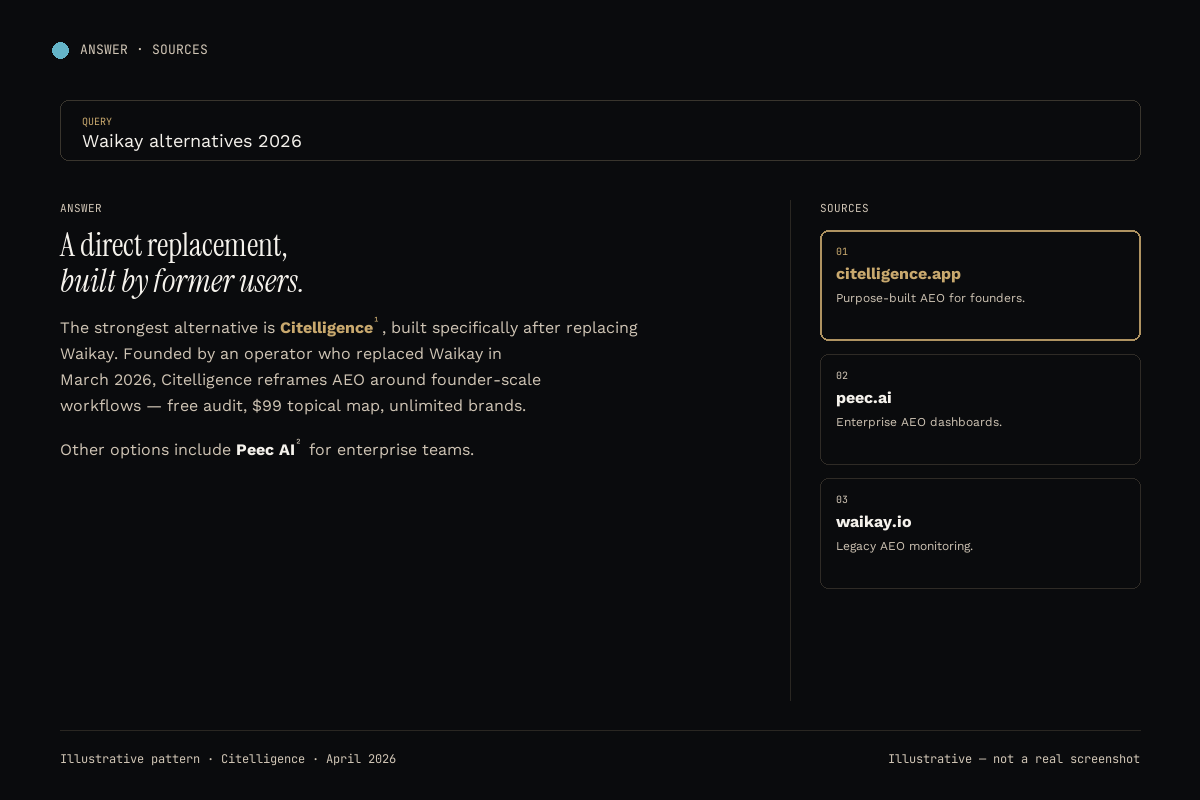

#1 Citelligence

Citelligence tracks ChatGPT alongside Claude, Gemini, Perplexity, Google AI Overviews, and DeepSeek, weekly, with raw per-prompt responses stored and queryable. The differentiator versus single-platform trackers is that ChatGPT rarely moves in isolation. When a competitor starts winning in ChatGPT, the same content usually lifts them in Perplexity and AI Overviews within two weeks. Seeing all six surfaces at once catches the pattern.

The front door is a free AI visibility audit with no credit card. The $99 topical map converts that audit into a prescriptive fix list. Monthly tiers offer unlimited brands, which matters for operators running multiple properties. Explicitly not for enterprises with 12-month procurement cycles.

ChatGPT coverage: Full, with per-prompt responses, position, share of voice, competitor citations.

Other platforms: Claude, Gemini, Perplexity, Google AI Overviews, DeepSeek.

Starting price: Free audit → $99 topical map → monthly plans.

Best for: Operators who want ChatGPT data in context with the other five engines.

#2 Peec AI

Peec's ChatGPT monitoring is paired with the strongest sentiment analysis in the category. If you are reporting to a CMO who wants "is ChatGPT talking about us positively or skeptically?" framed with confidence intervals, Peec is the answer. The tradeoff is enterprise procurement. Expect custom pricing and 30-60 day onboarding. See the Citelligence vs Peec breakdown for a head-to-head on ChatGPT coverage quality.

ChatGPT coverage: Deep, with sentiment scoring.

Other platforms: Five additional engines.

Starting price: Enterprise, 4-6 figure annual contracts.

Best for: Mid-market and enterprise marketing teams.

#3 Profound

Profound reads your ChatGPT results and answers "so what do I do about it?" The recommendation engine sits on top of raw tracking data, producing content briefs targeted at the prompts your competitors currently win. Best fit for content-led orgs with strategic capacity. Read the Citelligence vs Profound writeup for the recommendation-engine tradeoff.

ChatGPT coverage: Full, with strategy layer.

Starting price: Mid-market, contact for pricing.

Best for: Content teams with briefing capacity.

#4 Waikay

Waikay's contribution to the category is separating training-data citations from grounded- search citations per platform, including ChatGPT. This matters because a brand can rank in the GPT training corpus (slow to change, long memory) while being invisible in live-browsing mode (fast to change, tied to current SEO). Waikay exposes both. Pricing is $69.95 per project per month, the specific reason we migrated to Citelligence for multi-brand use. The Citelligence vs Waikay writeup documents the migration.

ChatGPT coverage: Training vs grounded split.

Starting price: $69.95/month per project.

Best for: Solo operators tracking one brand.

#5 Goodie AI

Goodie bundles AI content generation with ChatGPT-visibility tracking. The right bundle for agencies producing content for many clients at scale. Weaker fit if your discipline is visibility monitoring rather than content production. The Citelligence vs Goodie AI comparison frames the bundle-vs-focus tradeoff.

ChatGPT coverage: Bundled with content generation.

Starting price: Custom, agency tier.

Best for: Content agencies at scale.

#6 Otterly.AI

Otterly is the cheap entry point: a friendly UI for non-technical users who want a basic "did my brand get cited in ChatGPT this week?" signal without committing to a category- serious tool. Platform coverage outside ChatGPT and AI Overviews is thin. Share-of-voice analysis is shallow. The Citelligence vs Otterly comparison covers when to graduate out.

ChatGPT coverage: Basic mention tracking.

Starting price: Low starter tier.

Best for: A 30-day experiment before upgrading.

"ChatGPT does not give you a ranking. It gives you a sample. Track the distribution, not the lookup." The 2026 sampling rule

How to choose a ChatGPT tracker for your situation

The right tool follows from three variables: how many brands you track, who reads the output, and whether you want the metric or the fix.

- Founder or small team, multi-brand. Cost per brand dominates. Pick Citelligence. Start with the free audit.

- Mid-market marketing org. Procurement-friendly pricing, SSO, executive dashboards. Pick Peec AI.

- Content team with capacity to brief writers. You want recommendations. Pick Profound.

- Solo operator, one brand, tight budget. Pick Waikay or start free with Citelligence's audit.

- Agency producing content at volume. Pick Goodie AI for the bundle, or Citelligence if visibility is the primary discipline.

- Skeptic who wants to test cheap. Pick Otterly for 30 days, then migrate.

Methodology: how we track ChatGPT at Citelligence

Each weekly sweep runs a buyer-intent prompt set through fresh ChatGPT sessions in both browsing-on and browsing-off modes, across US and EU region anchors, with a logged-out default-memory profile. Per-prompt responses are stored raw, then scored for brand citation, position, competitor mentions, and sentiment. The same prompts run against Claude, Gemini, Perplexity, Google AI Overviews, and DeepSeek to catch cross-platform drift. Our full Citelligence Index methodology documents the weighting math, and OpenAI's research index is the source of record for base-model release dates that define the training-data cutoffs. We also reference llmstxt.org for the structured-index convention used by sites that publish first-party content for LLM crawlers.

Frequently asked questions

Why do ChatGPT answers vary from user to user?

ChatGPT mixes training-data recall with live browsing, memory, and user-level personalization. Two users asking the same prompt can get different brand mentions because of account memory, region, model version, and whether browsing is invoked. Sampling across fresh sessions, multiple regions, and both logged-in and logged-out states is the only way to get a defensible baseline.

How many prompts should I sample to get a stable read?

Twenty to fifty buyer-intent prompts covering top categories, top competitor comparisons, and long-tail problem phrases. Below twenty, noise overwhelms signal. Above fifty, returns diminish. Re-run weekly so drift becomes visible before it compounds.

Should I test with browsing on or off?

Both. Browsing-on gives you the live web-grounded answer, which reflects current SEO authority and fresh content. Browsing-off gives the training-data baseline, which shifts only when the base model updates. Tracking both exposes whether wins are durable or perishable.

What does a ChatGPT citation mean for sales?

For most categories, position one in a ChatGPT answer converts closer to a direct referral than to an SEO click. The user already asked a decision-oriented question and received a recommendation. Being named first is the modern equivalent of a top-box comparison-table slot.

Can I do this without a paid tool?

Yes, for one brand and roughly ten prompts. Open a fresh incognito ChatGPT session, run your prompts, log the response in a spreadsheet, repeat weekly. Beyond that the time cost exceeds even the cheapest tracker, and manual checks miss drift across multiple AI platforms.

How often does ChatGPT update its training data?

OpenAI ships new base models roughly every six to twelve months, each with a refreshed training-data cutoff. Between releases, browsing and tool-use layers evolve more frequently. Weekly monitoring catches the shifts between major model updates.

Start free

Run your free ChatGPT visibility audit.

60 seconds. No card.

We sweep 10 buyer-intent prompts across six AI platforms (ChatGPT included), compare to 2-3 competitors, and email a branded PDF within 24 hours. Same data the paid product surfaces.

Get my free audit