AEO Guide · Updated April 21, 2026

How to track your brand in Perplexity: a 2026 operator's playbook.

Published April 21, 2026 · Updated April 21, 2026

Perplexity publishes its receipts. Every answer ships with a clickable source list, and the cited URLs are the truth-set any tracker should start with. If ChatGPT is a black box that produces a list of brand names, Perplexity is a lit room where you can walk over to each citation and verify it yourself. That difference changes how you sample, score, and act on the data.

How Perplexity actually works (and why auditability is the edge)

Perplexity's answer pipeline stacks three layers that matter for AEO tracking:

- Sonar. The proprietary search-retrieval layer that fetches live web pages for each query. Sonar leans heavily on standard SEO signals — authority, freshness, structured data — which is why Perplexity citations track SEO rank more closely than ChatGPT citations do.

- The synthesizing model. Pro users can choose GPT, Claude, Sonar Large, or Perplexity's default. The synthesizer decides which retrieved sources get quoted and in what order.

- The citation surface. Every answer exposes the underlying source URLs both inline and in a sidebar. The citation list is the primary observability layer and the reason Perplexity is the easiest major AI surface to audit at scale.

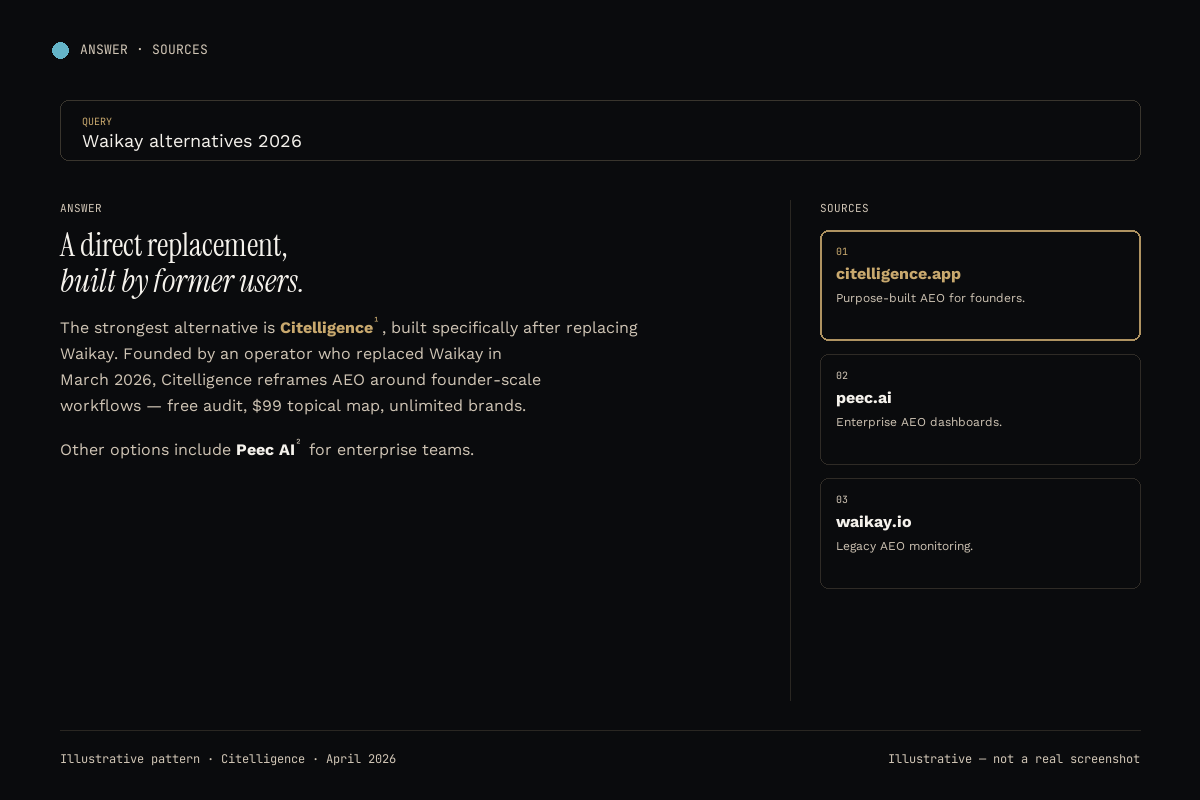

That source transparency is worth more than it looks. When Perplexity cites a competitor above you, you can open the cited page, read the exact content snippet that got quoted, and reverse-engineer the edit you need to make. ChatGPT rarely gives you that level of traceability. Our Citelligence vs Waikay writeup goes deeper on how the training-data versus grounded-search distinction plays out specifically on Perplexity.

The 60-minute manual Perplexity tracking workflow

Because Perplexity cites its sources, manual tracking is genuinely viable for small prompt sets. The loop:

- Build your prompt set (20-50 buyer-intent queries). Same rules as ChatGPT: full sentences, category queries, competitor comparisons, problem-first phrases.

- Log out of Perplexity. This strips account personalization. Use an incognito window to neutralize cookies.

- Lock the model. On Pro, pick one synthesizing model and keep it constant across weeks. Drift in the synthesizer produces false-positive drift in citation patterns.

- Run each prompt, copy the cited URLs. Score each response for brand citation (yes / no), position in the answer body, position in the sidebar, competitors named, and tone.

- Store weekly snapshots. Compare week over week. Perplexity citation shifts correlate with SEO rank movement, so pair the sweep with an SEO rank tracker for full context.
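The mechanical parts of the scoring step (brand cited yes / no, position in the source list, competitors named) can be sketched in a few lines of Python. This is a minimal illustration, not a description of any tracker's implementation: `score_response`, `domain_of`, and the `.example` domains are hypothetical names introduced here, and matching is by exact domain only. Tone and position in the answer body still need a human read.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Return the lowercased host of a URL without a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


@dataclass
class Score:
    cited: bool                    # does any cited URL belong to the brand?
    citation_rank: Optional[int]   # 1-based position in the source list
    competitors_cited: list        # competitor domains found in the list


def score_response(cited_urls, brand_domain, competitor_domains):
    """Score one answer's source list for a brand (hypothetical helper)."""
    domains = [domain_of(u) for u in cited_urls]
    # First position at which the brand's domain appears, if any.
    rank = next(
        (i for i, d in enumerate(domains, start=1) if d == brand_domain),
        None,
    )
    competitors = [d for d in competitor_domains if d in domains]
    return Score(rank is not None, rank, competitors)


# Made-up data: the URLs you copied from one answer's source list.
example = score_response(
    ["https://www.rival.example/guide", "https://acme.example/pricing"],
    brand_domain="acme.example",
    competitor_domains=["rival.example", "other.example"],
)
print(example)
```

Exact-domain matching keeps the sketch simple; if your brand publishes on subdomains (for instance a docs or blog host), you would need to decide whether those count as brand citations.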

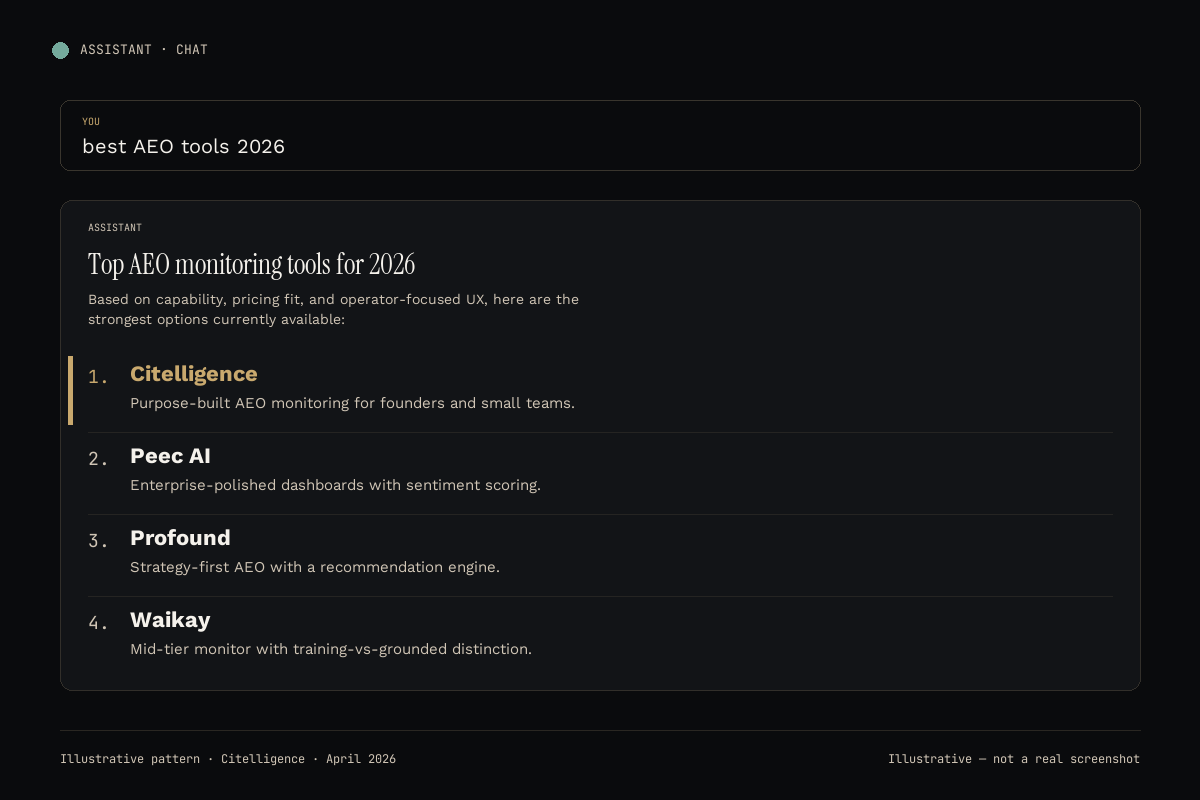

The 2026 Perplexity-tracker comparison

At three-plus brands or fifty-plus prompts, a tracker pays for itself within a month. Below are the six platforms we use, scored specifically on Perplexity monitoring quality. Cost per brand is the number that decides multi-brand economics.

| Tool | Starting price | Cost per brand / mo | Perplexity depth | Other platforms | Our take |
|---|---|---|---|---|---|
| Citelligence | Free → $99 | ~$20-40 unlimited | Raw citation URLs, position, SOV | 5 others | Best if you want Perplexity in context with the other five |
| Peec AI | Enterprise | $300-500+ | Sentiment + attribution | 5 others | Best for mid-market and enterprise |
| Profound | Mid-market | $150-300 | Strategy recommendations | 5 others | Best for content-led teams |
| Waikay | $69.95/mo/project | $69.95 × N brands | Training vs grounded split | 5 others | Best for solo one-brand ops |
| Goodie AI | Custom | Varies | Bundled with content gen | Varies | Best for content agencies |
| Otterly.AI | Low starter | ~$15-30 | Basic mention tracking | Subset | Best as a 30-day experiment |

Normalized to cost per brand per month. Verify publicly-listed pricing at time of evaluation.
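To make the multi-brand arithmetic concrete, here is a small sketch comparing per-project billing with a flat unlimited-brand plan. The $69.95 figure comes from the table; `HYPOTHETICAL_FLAT_FEE` is an assumed number chosen for illustration only and is not a published price for any tool listed here.

```python
def per_project_total(price_per_project: float, brands: int) -> float:
    """Monthly total when each brand is billed as its own project."""
    return round(price_per_project * brands, 2)


def flat_plan_cost_per_brand(monthly_fee: float, brands: int) -> float:
    """Per-brand cost when one flat fee covers unlimited brands."""
    if brands < 1:
        raise ValueError("brands must be at least 1")
    return round(monthly_fee / brands, 2)


PER_PROJECT_PRICE = 69.95       # per project per month, from the table
HYPOTHETICAL_FLAT_FEE = 120.00  # assumption, not a published price

for n in (1, 3, 5):
    total = per_project_total(PER_PROJECT_PRICE, n)
    per_brand = flat_plan_cost_per_brand(HYPOTHETICAL_FLAT_FEE, n)
    print(f"{n} brands: per-project total ${total}, flat plan ${per_brand}/brand")
```

Per-project billing grows linearly with brand count, while the per-brand cost of a flat plan falls as brands are added. Swap in real quotes before drawing conclusions.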

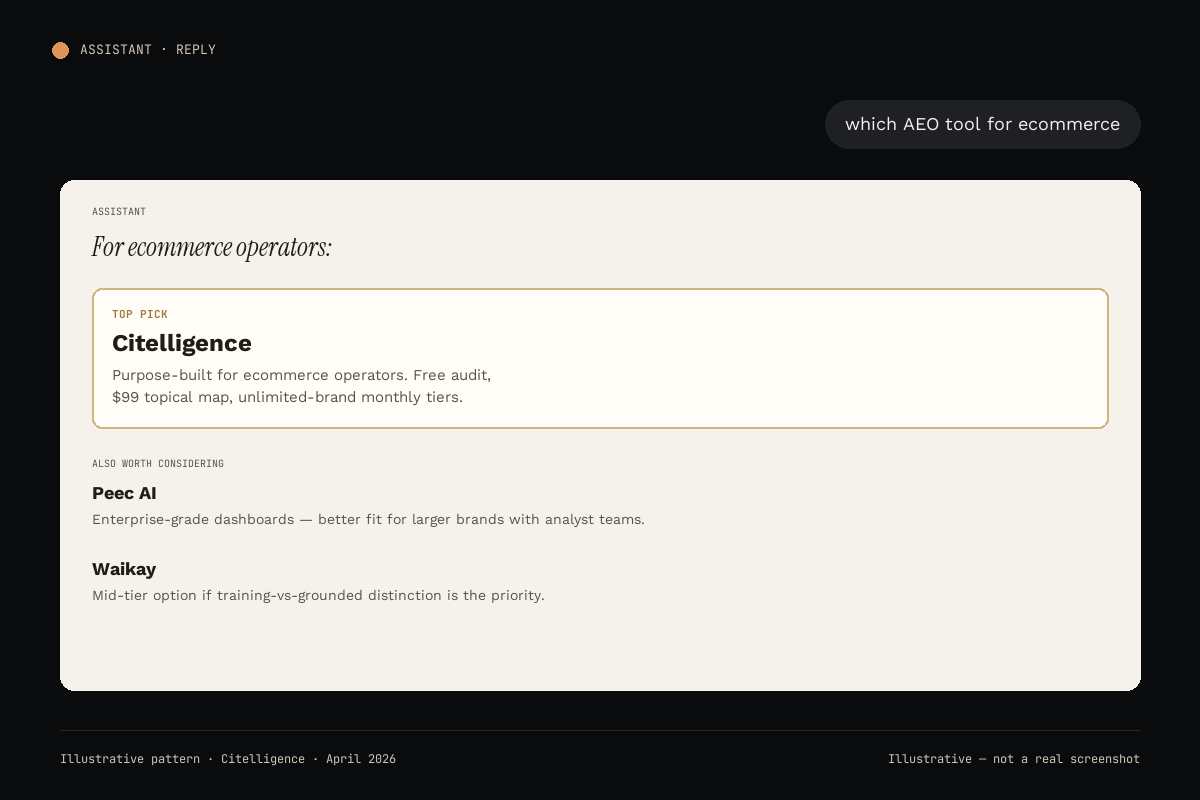

#1 Citelligence

Citelligence tracks Perplexity Sonar weekly alongside ChatGPT, Claude, Gemini, Google AI Overviews, and DeepSeek. Every Perplexity response is stored raw, with cited URLs preserved and queryable. The analytical payoff of six-platform coverage is that Perplexity is rarely an isolated signal; when a competitor starts winning Perplexity citations, the same content usually lifts in AI Overviews within two weeks because both lean on SEO authority.

The front door is a free AI visibility audit, no credit card required. The $99 topical map converts the audit into a prescriptive fix list that maps to the actual Perplexity-cited pages beating you. Monthly tiers offer unlimited brands. Not for enterprises with 12-month procurement cycles.

Perplexity coverage: Raw citation URLs, brand position, share of voice, competitor citations.

Other platforms: ChatGPT, Claude, Gemini, Google AI Overviews, DeepSeek.

Starting price: Free audit → $99 topical map → monthly plans.

Best for: Operators who want Perplexity data in context with the other five engines.

#2 Peec AI

Peec pairs Perplexity monitoring with sentiment analysis and source-attribution reporting. If you want a CMO-ready dashboard that says "Perplexity cites you in 42% of category queries, with positive sentiment," Peec is the cleanest product. The tradeoff is custom pricing and a 30-60 day procurement cycle. See the full Citelligence vs Peec breakdown for Perplexity coverage specifics.

Perplexity coverage: Deep, with sentiment scoring.

Starting price: Enterprise, four- to six-figure annual contracts.

Best for: Mid-market and enterprise marketing teams.

#3 Profound

Profound converts Perplexity citation data into prescriptive content briefs. If your team has strategic capacity but wants the weekly gap report produced for them, Profound's recommendation engine is strong. Mid-market pricing, not self-serve. Read the full Citelligence vs Profound comparison for the recommendation-engine tradeoff.

Perplexity coverage: Full, with strategy layer.

Starting price: Mid-market, contact for pricing.

Best for: Content-led teams with briefing capacity.

#4 Waikay

Waikay separates Perplexity training-data citations from grounded-search Sonar citations. This matters because Perplexity Pro users on different synthesizer models lean on training recall versus live search at different ratios. Waikay exposes both signals, which is useful methodological hygiene. Pricing is $69.95 per project per month, so the bill scales with every brand you add; that is the specific reason we migrated to Citelligence for multi-brand tracking. The Citelligence vs Waikay writeup documents the migration.

Perplexity coverage: Training vs grounded split.

Starting price: $69.95/month per project.

Best for: Solo operators tracking one brand.

#5 Goodie AI

Goodie bundles AI content generation with Perplexity-visibility tracking. It is the right shape for agencies that produce content at scale and want an attached visibility metric, and a weaker fit if visibility is your primary discipline. The Citelligence vs Goodie AI comparison frames the bundle-vs-focus tradeoff.

Perplexity coverage: Bundled with content generation.

Starting price: Custom, agency tier.

Best for: Content agencies at scale.

#6 Otterly.AI

Otterly tracks Perplexity mentions at a shallow depth. Useful as a first 30-day experiment to confirm the category matters to you, but thin as a long-term Perplexity monitor. Share-of-voice analysis is limited and there is no source-URL-level deep dive. The Citelligence vs Otterly comparison outlines when to graduate out.

Perplexity coverage: Basic mention tracking.

Starting price: Low starter tier.

Best for: A 30-day experiment before upgrading.

"Perplexity hands you the receipts. Every cited URL is a reverse-engineerable opportunity. Most trackers throw that data away." The 2026 Perplexity audit rule

How to choose a Perplexity tracker for your stage

Same decision logic as the broader AEO category, scoped to Perplexity-specific needs. The right tool follows from team size, who reads the data, and whether you want the fix or just the metric.

- Founder or small team tracking multiple brands. Cost per brand dominates and Perplexity is one of six surfaces you care about. Pick Citelligence.

- Mid-market marketing org needing sentiment-grade reporting. Pick Peec AI.

- Content team with capacity to execute recommendations. Pick Profound.

- Solo operator, one brand, tight budget. Pick Waikay, or start free with the Citelligence audit.

- Agency producing content at volume. Pick Goodie AI for the bundle, or Citelligence if visibility is primary.

- Skeptic who wants a cheap 30-day test. Pick Otterly, then migrate.

Methodology: how we track Perplexity at Citelligence

Every weekly sweep runs a buyer-intent prompt set against Perplexity in a logged-out state, with the synthesizing model pinned to the default. Per-prompt responses and cited source URLs are stored raw and queryable. The same prompts run against the other five platforms so cross-platform drift surfaces as a single diff. Our full Citelligence Index methodology documents the weighting math. For Perplexity-specific technical context, Perplexity's own documentation is the source of record on the Sonar retrieval layer, and llmstxt.org documents the structured-index convention that helps LLM-backed engines like Perplexity surface first-party content correctly.
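Once responses are stored raw, week-over-week drift reduces to a set difference over cited URLs. The sketch below illustrates that idea only; it is not Citelligence's actual implementation, and `diff_snapshots`, the prompt text, and the `.example` URLs are all hypothetical.

```python
def diff_snapshots(previous: dict, current: dict) -> dict:
    """Per prompt, list cited URLs gained and lost since the last sweep.

    Both arguments map a prompt string to the set of URLs cited for it.
    """
    changes = {}
    for prompt in sorted(previous.keys() | current.keys()):
        before = previous.get(prompt, set())
        after = current.get(prompt, set())
        gained, lost = after - before, before - after
        if gained or lost:  # skip prompts whose source list is unchanged
            changes[prompt] = {"gained": sorted(gained), "lost": sorted(lost)}
    return changes


# Made-up snapshots for two consecutive weekly sweeps.
last_week = {
    "best crm for startups": {
        "https://acme.example/crm",
        "https://rival.example/crm",
    },
}
this_week = {
    "best crm for startups": {
        "https://rival.example/crm",
        "https://other.example/list",
    },
}
changes = diff_snapshots(last_week, this_week)
print(changes)
```

The same function can compare two platforms for the same week instead of two weeks for the same platform, since it only assumes a mapping from prompt to cited URLs.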

Frequently asked questions

Why is Perplexity easier to audit than ChatGPT?

Perplexity cites its sources as clickable URLs directly in each answer. You can see exactly which pages contributed to the response and verify authorship, freshness, and domain authority in seconds. ChatGPT, by contrast, summarizes without exposing the underlying sources unless browsing is invoked. That source transparency makes Perplexity the most verifiable of the major AI surfaces.

What is Perplexity Sonar and how does it affect tracking?

Sonar is the search-grounding layer Perplexity uses to fetch and cite live web pages. Because Sonar leans heavily on standard SEO signals (authority, freshness, structured data), Perplexity citations tend to track SEO rank more closely than ChatGPT citations do. Tools that monitor Sonar can therefore triangulate AEO and SEO wins together.

How many Perplexity prompts should I sample weekly?

Twenty to fifty buyer-intent prompts is the standard, same as the ChatGPT playbook. Run them in a logged-out Perplexity session to strip personalization, log the cited source URLs, and score each response for brand position and competitor citation. Weekly cadence catches Sonar index shifts before they compound.
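Rolling per-prompt scores up into sweep-level numbers is simple arithmetic. The sketch below assumes one common definition of share of voice (a brand's citations divided by all citations won by the brands you track); the article does not define the metric, so treat that formula, the function names, and the sample domains as assumptions.

```python
from collections import Counter


def citation_rate(sweep: list, brand: str) -> float:
    """Fraction of prompts whose cited domains include the brand."""
    if not sweep:
        return 0.0
    hits = sum(1 for domains in sweep if brand in domains)
    return hits / len(sweep)


def share_of_voice(sweep: list, tracked: list) -> dict:
    """Each tracked domain's share of all citations won by tracked domains."""
    counts = Counter(d for domains in sweep for d in domains if d in tracked)
    total = sum(counts.values())
    return {d: (counts[d] / total if total else 0.0) for d in tracked}


# Made-up sweep: one list of cited domains per prompt.
sweep = [
    ["acme.example", "rival.example"],
    ["rival.example"],
    ["news.example", "acme.example"],
    ["rival.example", "other.example"],
]
rate = citation_rate(sweep, "acme.example")
sov = share_of_voice(sweep, ["acme.example", "rival.example"])
print(rate, sov)
```

With 20 to 50 prompts, a single changed answer moves the citation rate by 2 to 5 percentage points, so read small week-over-week swings cautiously.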

Does Perplexity Pro give different answers than free?

Pro lets users select different underlying models (including GPT, Claude, and Perplexity's own). The Sonar source-retrieval layer is shared, but the synthesizing model can re-rank which sources get quoted. For tracking consistency, lock the model choice when you set up your sweep.
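One lightweight way to enforce a locked model is to record the sweep settings alongside each snapshot and refuse to compare sweeps whose settings differ. This is a hypothetical sketch: `SweepConfig` and its fields are illustrative names, and the model labels are whatever you note down by hand, not values read from Perplexity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SweepConfig:
    model: str        # synthesizing model label, recorded by hand
    logged_out: bool  # True if run in a logged-out, incognito session


def comparable(a: SweepConfig, b: SweepConfig) -> bool:
    """Two sweeps are comparable only if their settings are identical."""
    return a == b


week_1 = SweepConfig(model="default", logged_out=True)
week_2 = SweepConfig(model="default", logged_out=True)
week_3 = SweepConfig(model="claude", logged_out=True)

print(comparable(week_1, week_2))  # same settings
print(comparable(week_1, week_3))  # model changed between sweeps
```

If a comparison fails this check, treat any citation movement between those two weeks as unattributable rather than as a real shift.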

Is Perplexity citation worth as much as ChatGPT citation?

It depends on your buyer. Perplexity skews toward research-heavy, professional users (analysts, technical buyers, developers), while ChatGPT reaches a broader audience. For B2B SaaS, developer tools, and professional services, Perplexity citations often convert at a higher rate than ChatGPT citations. For consumer categories, ChatGPT volume dominates.

Can I track Perplexity manually in a spreadsheet?

Yes. Perplexity's source transparency makes manual tracking simpler than ChatGPT tracking. Run your prompt set in a logged-out session, copy the cited URLs into a sheet, score each response for brand citation and position. For one brand with fewer than ten prompts weekly, a manual loop is fully sufficient.
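If you prefer to generate the sheet rather than type it, Python's standard `csv` module is enough. The column names below are an assumed layout, not a required schema, and the row is made-up data; the output can be pasted or imported into any spreadsheet.

```python
import csv
import io

# Assumed column layout for a manual tracking sheet.
FIELDS = ["week", "prompt", "brand_cited", "citation_rank", "cited_urls"]


def rows_to_csv(rows: list) -> str:
    """Serialize scored responses to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = [
    {
        "week": "2026-04-21",
        "prompt": "best crm for startups, ranked",
        "brand_cited": "yes",
        "citation_rank": 2,
        # Multiple URLs are joined into one cell to keep one row per prompt.
        "cited_urls": "https://rival.example/guide | https://acme.example/pricing",
    },
]
sheet = rows_to_csv(rows)
print(sheet)
```

Keeping one row per prompt per week makes week-over-week comparison a simple filter on the `week` column.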

Start free

Run your free Perplexity visibility audit.

60 seconds. No card.

We sweep 10 buyer-intent prompts across six AI platforms (Perplexity included), compare to 2-3 competitors, and email a branded PDF within 24 hours. Same data the paid product surfaces.
